TL;DR

- Have a tested ransomware recovery plan and backup and disaster recovery playbook before an incident; prioritize containment in the first 24 hours.

- Preserve evidence, reset credentials, and isolate impacted systems; follow clear forensic triggers for escalation.

- Follow compliance windows. Under the HIPAA Breach Notification Rule, covered entities must notify affected individuals without unreasonable delay and no later than 60 days after discovering a breach, and breaches affecting 500 or more individuals must also be reported to HHS within that window. NYDFS (23 NYCRR Part 500) requires covered entities to notify the superintendent as promptly as possible and no later than 72 hours after determining that a reportable cybersecurity event has occurred.

- Engage law enforcement (FBI/IC3) immediately for active extortion and coordinate with your cyber insurer and MSSP for technical response.

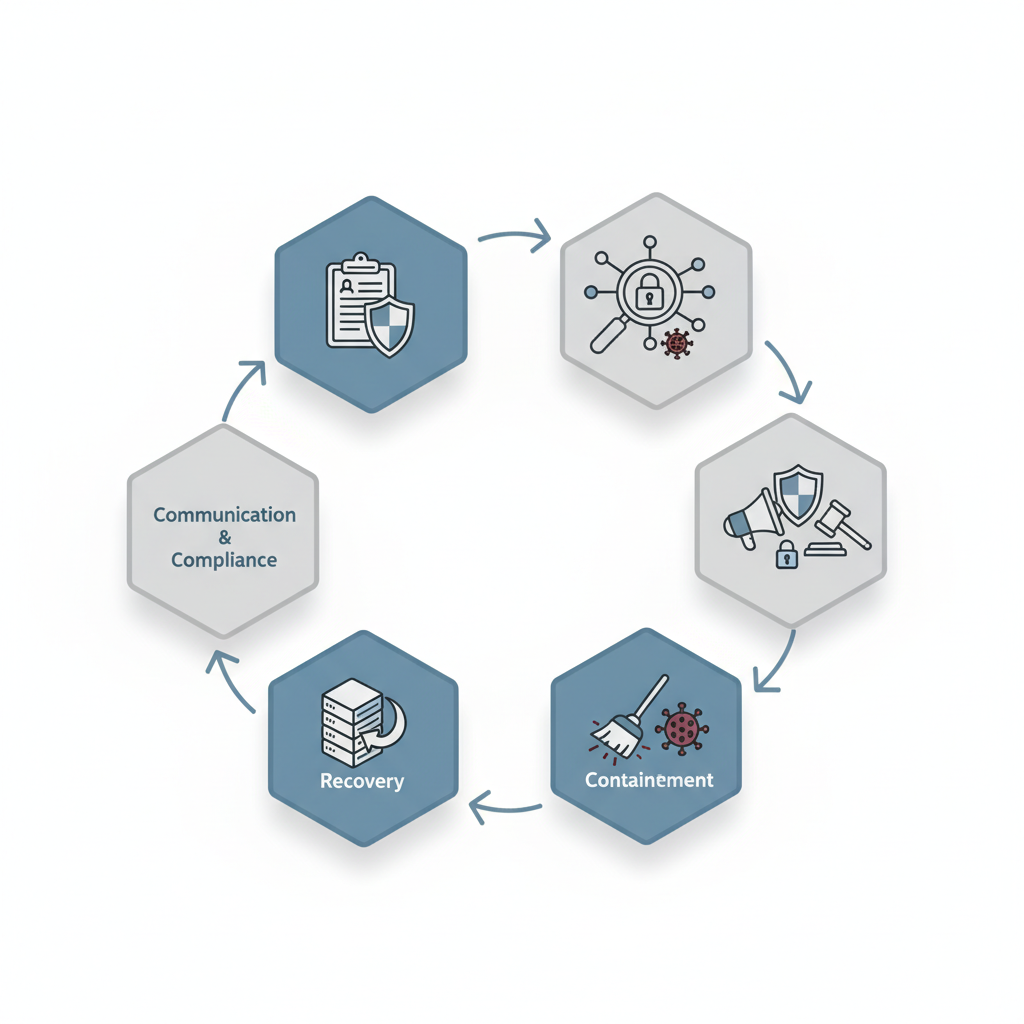

If you operate in New Jersey or New York and handle regulated data, a clear, practiced plan for ransomware incident response and recovery is a baseline operational requirement. This playbook covers ransomware incident response for NJ and NY organizations, with practical steps that developers, marketers, and website owners can apply immediately. It walks through preparation, detection, containment, recovery, communication, compliance, and how an MSSP assists with senior-engineer-led recovery.

When not to use this playbook

This playbook does not replace organization-specific legal or compliance advice, nor does it substitute for vendor-specific operational runbooks. Do not use it as your only source if your systems are fully cloud-native with provider-managed backups and you already have a documented service provider runbook; instead adapt these steps to your provider's operational procedures.

Purpose and scope — which incidents this playbook covers

This playbook covers ransomware incidents in which an attacker encrypts data, threatens to leak stolen data, or otherwise blocks access to systems owned or operated by regulated NJ and NY companies. It applies to incidents that affect on-premises servers, virtual machines, cloud storage buckets, managed databases, or websites that store or process regulated data. The guidance assumes an attacker has gained execution on hosts or encrypted file shares and that restoration will rely on intact backups or negotiated recovery.

Examples in scope: (1) a payroll file server in New Jersey is encrypted and a ransom demand follows, (2) a New York healthcare provider discovers exfiltration and a threat to publicize PHI, (3) a marketing database is held for ransom to disrupt customer communications. Out-of-scope events include routine malware detections without evidence of lateral movement, expired certificates, and single-endpoint malware fully remediated by local antivirus without data exposure.

Definition: "Ransomware is malware that denies authorized access to systems or data and frequently involves extortion through data encryption or threatened data disclosure." This playbook ties to the operational artifacts you'll need: a ransomware recovery plan, a backup and disaster recovery playbook, an evidence preservation checklist, and a decision matrix for pay/no-pay choices.

Preparation phase — roles, contacts, and evidence preservation

Preparation shortens recovery time and reduces regulatory risk. Start by documenting roles and contacts in a one-page incident roster: executive lead, legal counsel, compliance officer, technical lead, communications lead, external forensics vendor, MSSP contact, cyber insurer, and law enforcement liaison. Assign alternates. Store the roster in an offline location and in a cloud document with restricted access.

Preserve evidence immediately and consistently. Evidence preservation checklist (copyable):

- Isolate and image affected hosts (disk images, memory captures).

- Collect logs: EDR telemetry, SIEM alerts, firewall logs, VPN sessions, AD logs, and cloud audit trails.

- Export attacker indicators: file hashes, suspicious accounts, IOCs, ransom notes.

- Retain email copies and relevant Slack/MS Teams threads in read-only form.
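
One way to support the checklist above is to hash every collected artifact at collection time, so that later copies can be checked against the original. The following is a minimal sketch, not a forensic tool: the function names, the manifest layout, and the `collected_by` field are illustrative assumptions, and a real engagement should follow your forensic vendor's chain-of-custody procedure.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large disk images need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(evidence_dir: Path, collected_by: str) -> dict:
    """Record relative path, size, and SHA-256 for every file under evidence_dir.

    The manifest layout is hypothetical; adapt it to your evidence procedure.
    """
    entries = []
    for path in sorted(p for p in evidence_dir.rglob("*") if p.is_file()):
        entries.append({
            "file": str(path.relative_to(evidence_dir)),
            "bytes": path.stat().st_size,
            "sha256": sha256_of(path),
        })
    return {
        "collected_by": collected_by,
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "entries": entries,
    }
```

Store the resulting manifest separately from the evidence itself (for example on the offline evidence server) so that tampering with one does not silently invalidate the other.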

Practical thresholds and artifacts: decide forensic escalation triggers in advance. For example, escalate to full forensic engagement when any two of the following occur: confirmed encryption of >1% of production storage, evidence of data exfiltration (file transfer to untrusted host), or ransom demand/extortion contact. If only one low-risk endpoint is affected and EDR shows no lateral movement, handle internally; if the threshold is met, engage a third-party forensic firm and the MSSP for containment.
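
The "any two of three" escalation rule above can be written down as a small function so that the decision is not re-argued during an incident. This is a sketch under stated assumptions: the `IncidentSignals` type and its field names are hypothetical, and the 1% figure is simply the example threshold from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class IncidentSignals:
    """Hypothetical container for the three example escalation signals."""
    encrypted_fraction: float    # share of production storage confirmed encrypted (0.0 to 1.0)
    exfiltration_evidence: bool  # e.g. file transfer to an untrusted host
    ransom_contact: bool         # ransom demand or extortion contact received


def should_escalate(signals: IncidentSignals, min_triggers: int = 2) -> bool:
    """Return True when at least min_triggers of the three conditions hold."""
    triggers = [
        signals.encrypted_fraction > 0.01,  # ">1% of production storage"
        signals.exfiltration_evidence,
        signals.ransom_contact,
    ]
    return sum(triggers) >= min_triggers
```

For example, `should_escalate(IncidentSignals(0.05, True, False))` returns `True`, while a single encrypted endpoint with no other signal does not meet the threshold.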

Documented responsibilities reduce human error; assign single-point owners for evidence collection and communications.

Actionable steps to prepare before an incident:

- Create a ransomware recovery plan, then test it yearly and after any major infrastructure change.

- Validate backups weekly and perform quarterly restore drills from the backup and disaster recovery playbook: test restores of critical systems and verify data integrity.

- Pre-negotiate forensic and legal retainers and confirm cyber insurer notification requirements.

- Confirm MSSP ransomware response SLAs and senior-engineer availability for recovery windows.

Detection & analysis — containment thresholds and forensic triggers

Fast, accurate detection determines the containment strategy. Use EDR, SIEM correlation, and automated alerts to detect anomalous file writes, unusual encryption activity, mass file renames, or creation of ransom notes. For websites, monitor sudden changes to file integrity, configuration, or unauthorized admin access.

Detection thresholds you can operationalize: alert when file write rates exceed P95 baseline by 10x for 5 minutes, when >100 unique files are renamed on a file server within 10 minutes, or when unknown accounts execute high-privilege processes. These thresholds provide objective triggers for containment rather than subjective judgment calls.
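
To show how two of these thresholds might be evaluated, here is a minimal sketch of a sliding-window rename counter and a sustained write-spike check. It assumes events arrive in timestamp order and that write counts are sampled once per minute; the class and function names are illustrative and do not correspond to any particular EDR or SIEM product.

```python
from collections import deque
from datetime import datetime, timedelta


class RenameBurstDetector:
    """Flags more than `limit` unique renamed paths inside a sliding window."""

    def __init__(self, limit: int = 100, window: timedelta = timedelta(minutes=10)):
        self.limit = limit
        self.window = window
        self._events = deque()  # (timestamp, path) pairs, oldest first

    def observe(self, when: datetime, path: str) -> bool:
        """Record one rename event; return True if the threshold is exceeded."""
        self._events.append((when, path))
        cutoff = when - self.window
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()
        unique_paths = {p for _, p in self._events}
        return len(unique_paths) > self.limit


def sustained_write_spike(per_minute_writes, p95_baseline, factor=10, minutes=5):
    """True if each of the last `minutes` samples exceeds factor * p95_baseline."""
    recent = list(per_minute_writes)[-minutes:]
    return len(recent) == minutes and all(w > factor * p95_baseline for w in recent)
```

Recomputing the set of unique paths on every event is fine for a sketch; a production rule would usually be expressed in the SIEM's own query language instead.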

Forensic triggers: initiate formal forensic collection if any of these are observed: (a) evidence of data exfiltration to external IPs, (b) ransom demand received, (c) attacker persistence (scheduled tasks, new service installs), or (d) use of known ransomware tools. Forensic collection includes full-memory captures, disk images, and secure transfer of logs to an evidence server.

Sample timeline for the first 72 hours (use as baseline): detection (0–4 hours): verify detection, preserve volatile evidence, and set incident status; containment (4–24 hours): isolate segments, snapshot backups, reset high-risk credentials; recovery (24–72+ hours): restore from vetted backups and validate application integrity. Track regulator notification windows in parallel, as described in the compliance section.

Containment & eradication — isolation, credential resets, and malware removal

Containment prevents further spread; eradication removes the attacker's presence. Immediate containment steps: isolate impacted VLANs at the network-segment level, remove affected hosts from the network (do not power them off before imaging memory), and disable compromised accounts. If an attack is still in progress, consider blocking C2 servers at the network level using firewall rules and DNS sinkholing.

Credential resets should follow a prioritized sequence: reset service accounts tied to exposed systems first, then admin accounts, then user accounts. Use forced password changes with MFA enforced. If Active Directory shows signs of compromise, contain by disabling replication from affected domain controllers until forensics confirm integrity.
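
The reset sequence above is easy to encode as a sort key so that the working list handed to engineers is already in priority order. This is an illustrative sketch: the tuple layout is hypothetical, and placing service accounts that are not tied to exposed systems directly after the exposed ones is an assumption the text does not specify.

```python
# Lower number = reset earlier. Order follows the text: service, admin, user.
RESET_PRIORITY = {"service": 0, "admin": 1, "user": 2}


def reset_order(accounts):
    """Sort (name, kind, tied_to_exposed_system) tuples into reset order.

    Within each kind, accounts tied to exposed systems come first
    (an assumption), then ties are broken alphabetically by name.
    """
    return sorted(
        accounts,
        key=lambda a: (RESET_PRIORITY[a[1]], not a[2], a[0]),
    )
```

For instance, a list containing `("alice", "user", False)`, `("svc-backup", "service", True)`, and `("dom-admin", "admin", True)` sorts to the service account first, the admin account second, and the user account last.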

Malware removal is staged: if EDR tools can fully remediate and attest to removal, proceed with staged reintroduction to the network. If persistence artifacts are present, conduct full rebuilds from trusted images. Never restore from a backup taken during or after the compromise window; always verify backup timestamps and immutable snapshot integrity.

Reset high-privilege credentials before any restore to prevent immediate re-compromise after recovery.

Concrete eradication checklist:

- Isolate impacted network segments or hosts within 4 hours of the containment trigger.

- Reset credentials for all admin/service accounts within 12 hours.

- Perform malware scans and image verification; rebuild compromised systems when persistence is suspected.

- Record every step in the incident log for regulatory and insurer review.

Recovery — restoring from backups, validating integrity, post-restore hardening

Recovery relies on a reliable ransomware recovery plan and an executable backup and disaster recovery playbook. Restore decisions depend on your recovery objectives and the integrity of backups. Always verify backups before full cutover by restoring to an isolated network and running integrity checks and smoke tests.

Practical restore steps: (1) identify the most recent uncompromised backup snapshot, (2) restore to an isolated environment, (3) validate data integrity and application functionality with test users, (4) conduct a controlled cutover and monitor for anomalies. For typical SaaS-backed services, target API latency under 200 ms in smoke tests; for websites, check page loads and key transactions.
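
Step (1) can be sketched as a filter over the snapshot catalog: keep only snapshots taken before the compromise began, then take the newest. The `Snapshot` type and its fields are hypothetical stand-ins for whatever your backup product reports, and requiring immutability here is an assumption drawn from the immutability guidance elsewhere in this playbook.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Snapshot:
    """Hypothetical view of one backup catalog entry."""
    snapshot_id: str
    taken_at: datetime
    immutable: bool


def latest_clean_snapshot(snapshots, compromise_start: datetime) -> Optional[Snapshot]:
    """Newest immutable snapshot strictly older than the compromise start, or None."""
    candidates = [
        s for s in snapshots
        if s.taken_at < compromise_start and s.immutable
    ]
    return max(candidates, key=lambda s: s.taken_at, default=None)
```

The `compromise_start` value should come from forensics, not from the time the ransom note appeared; attackers are often present well before encryption starts.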

Include a recovery checklist in your playbook:

- Confirm the backup timestamp predates the compromise window.

- Restore to sandbox and run checksum or hash comparisons for critical files.

- Reintroduce systems gradually and monitor EDR/SIEM for suspicious activity for 72 hours post-restore.

- Harden restored systems: apply patches, rotate keys/certificates, enforce MFA, and tighten firewall rules.
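
The hash comparison in the checklist above might look like the following sketch, which checks restored files in the sandbox against a set of known-good SHA-256 digests. The `expected` mapping is an assumption: it presumes you recorded hashes of critical files before the incident.

```python
import hashlib
from pathlib import Path


def verify_restore(expected: dict, restored_root: Path) -> dict:
    """Compare restored files against known-good SHA-256 digests.

    expected maps a relative path to its hex digest (hypothetical format).
    Returns the relative paths that are missing or whose hash differs.
    """
    missing, mismatched = [], []
    for rel_path, want in sorted(expected.items()):
        target = restored_root / rel_path
        if not target.is_file():
            missing.append(rel_path)
            continue
        # read_bytes() loads the whole file; hash in chunks for very large files.
        got = hashlib.sha256(target.read_bytes()).hexdigest()
        if got != want:
            mismatched.append(rel_path)
    return {"missing": missing, "mismatched": mismatched}
```

An empty result for both lists is a necessary check before cutover, not a sufficient one; application smoke tests still need to pass.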

When deciding between rebuild and restore, use a decision rule: rebuild if attacker persistence is confirmed or if backups lack immutability guarantees; restore if backups are immutable and verification shows no tampering. Maintain a documented list of rebuild images and golden configurations to speed rebuilds.
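
Written as code, the rebuild-or-restore rule is a two-branch check. This is a sketch of the rule as stated above; the function and parameter names are illustrative, and falling back to a rebuild when backups are immutable but verification fails is an assumption, since the rule does not cover that case explicitly.

```python
def recovery_action(persistence_confirmed: bool,
                    backups_immutable: bool,
                    backup_verified_clean: bool) -> str:
    """Return "rebuild" or "restore" per the decision rule in this section."""
    # Rebuild if the attacker is confirmed persistent or backups lack immutability.
    if persistence_confirmed or not backups_immutable:
        return "rebuild"
    # Restore only when immutable backups also pass tamper verification.
    if backup_verified_clean:
        return "restore"
    # Assumption: immutable but unverified backups are treated as untrusted.
    return "rebuild"
```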

Communication plan — internal, customers, partners, regulators, and law enforcement

Clear communication reduces confusion and legal exposure. Create pre-approved notification templates for internal staff, customers, partners, and regulators. Assign a communications lead and legal review for all external statements. Limit technical detail in public messages; instead state the impact, next steps, and expected timeline for updates.

Regulator and law enforcement guidance: NYDFS (23 NYCRR Part 500) requires covered entities to notify the superintendent as promptly as possible and no later than 72 hours after determining that a reportable cybersecurity event has occurred. Report active extortion immediately to FBI/IC3 and coordinate with your cyber insurer. Healthcare entities must follow the HHS breach notification rules: notify affected individuals without unreasonable delay and no later than 60 days after discovery, and report breaches affecting 500 or more individuals to HHS within the same window.

Suggested legal language for regulator notification (editable):

On [date/time], we detected unauthorized activity impacting [system names]. We have contained the incident and engaged forensic specialists. We are notifying affected parties per applicable law and will provide a follow-up report within 72 hours of our determination.

Concrete communication cadence: initial holding message within 4–8 hours of confirmed detection, customer impact notice within 24–48 hours if service disruption or data exposure occurred, regulator notification per statutory windows, and a technical after-action report within 30 days where required by contract or regulation.
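
The cadence above translates directly into date arithmetic from the moment detection is confirmed. The sketch below covers only the fixed-offset items; regulator deadlines are left out on purpose because they run from different events (determination, discovery) and should be tracked with counsel. The function and key names are illustrative.

```python
from datetime import datetime, timedelta


def communication_schedule(confirmed_at: datetime) -> dict:
    """Compute target windows from the time detection was confirmed.

    Windows are (earliest, latest) pairs; offsets mirror the cadence in the text.
    """
    return {
        "holding_message": (
            confirmed_at + timedelta(hours=4),
            confirmed_at + timedelta(hours=8),
        ),
        "customer_notice": (
            confirmed_at + timedelta(hours=24),
            confirmed_at + timedelta(hours=48),
        ),
        "after_action_report": confirmed_at + timedelta(days=30),
    }
```

Pass timezone-aware datetimes in practice so that deadlines are unambiguous across teams in different locations.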

Template incident notification for customers and regulators

Use this template as a starting point. Tailor to jurisdiction and counsel review:

Subject: Security incident affecting [company name] (initial notice)

Summary: On [date/time] we detected unauthorized activity affecting [systems].

Immediate steps: we isolated affected systems, engaged forensic experts, and notified law enforcement and our insurer.

Impact: [brief description, for example service outage or potential data types affected].

Next steps: containment and restoration are underway; we will provide updates within 72 hours and a full incident report when available.

Contact: [communications lead name, email, phone].

Compliance actions — HIPAA breach notification (HHS), NYDFS reporting (23 NYCRR Part 500), FINRA/industry reporting

Compliance actions depend on sector and data type. Healthcare organizations must follow the HHS breach notification rules: notify affected individuals without unreasonable delay and no later than 60 days after discovering a breach, and report breaches affecting 500 or more individuals to HHS within the same window. Financial institutions covered by NYDFS must follow the 23 NYCRR Part 500 reporting requirements and notify the superintendent as promptly as possible, no later than 72 hours after determining that a reportable cybersecurity event has occurred.

Industry-specific reporting: FINRA and other regulators have their own timelines and report formats—consult your compliance team and legal counsel. Maintain a compliance checklist with the following items: identify covered data, map data flows to determine affected parties, prepare notification letters, and track regulator deadlines in a centralized tracker.

Practical example: a New York-based firm covered by Part 500 that discovers customer PII exfiltration must work three tracks in parallel: (1) notify the NYDFS under Part 500, (2) prepare HIPAA notifications if PHI is involved, and (3) inform cyber insurers per policy timing clauses to preserve coverage.

Working with law enforcement & cyber insurers (IC3, FBI)

Contact law enforcement early for extortion and cross-border threats. File an IC3 complaint and contact the local FBI Cyber Task Force when your organization faces active extortion or large-scale data exfiltration. Law enforcement can help trace threat actors and coordinate takedowns, but it does not guarantee data recovery or provide payment guidance.

Notify your cyber insurer per policy requirements and follow insurer guidance for approved vendors. Common policy conditions: notification within 24–72 hours of detection, use of insurer-approved forensic vendors, and documented chain-of-custody for evidence. Failing to notify promptly can jeopardize coverage, so track insurer contact details in your incident roster.

Example engagement steps: immediately file an IC3 complaint for extortion; provide a redacted incident summary to the insurer; obtain insurer approval for forensic engagement and, if the policy permits it, for ransomware negotiation. Keep detailed logs of all insurer communications for claims processing.

Post-incident review & tabletop exercises

Conduct a post-incident review within 30 days to capture root causes, timeline gaps, and process failures. Produce an after-action report with remediation tasks, owners, and timelines. Quantify recovery metrics: time to detect, time to contain, time to restore, scope of data exposed, and cost estimates.
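
The time-based metrics can be derived from four timestamps in the incident log. This sketch uses one common set of definitions (detect measured from compromise start, contain from detection, restore from containment); those definitions and the function name are assumptions, so align them with whatever your after-action report template uses.

```python
from datetime import datetime


def recovery_metrics(compromise_start: datetime,
                     detected_at: datetime,
                     contained_at: datetime,
                     restored_at: datetime) -> dict:
    """Return elapsed hours for each phase of the incident."""
    def hours(later: datetime, earlier: datetime) -> float:
        return (later - earlier).total_seconds() / 3600

    return {
        "time_to_detect_h": hours(detected_at, compromise_start),
        "time_to_contain_h": hours(contained_at, detected_at),
        "time_to_restore_h": hours(restored_at, contained_at),
    }
```

Tracking the same three numbers across real incidents and tabletop exercises makes it possible to see whether process changes actually shorten response.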

Tabletop exercises should occur at least annually and after major changes. Design realistic scenarios: encrypted file server during peak processing, exfiltration of PHI with extortion, or a website defacement that affects customer trust. Run through detection, initial response, communications, and legal reporting. Use role-play to validate contact lists and decision-making authority.

Concrete exercise outputs: updated incident roster, revised forensic escalation thresholds, verified backup and disaster recovery playbook processes, and a prioritized remediation backlog tracked to closure.

How an MSSP supports response — threat hunting, forensics, senior-engineer-led recovery

An MSSP provides continuous monitoring, threat hunting, and senior-engineer-led response capabilities that reduce mean time to detect and mean time to recover. In a ransomware event an MSSP can rapidly surface indicators of compromise, isolate hosts at the network level, and lead coordinated restoration with your internal teams.

Key MSSP roles in ransomware response:

- Real-time alerting and correlation through SIEM and EDR.

- Proactive threat hunting to find lateral movement and unknown persistence.

- Forensic collection and analysis coordination with external vendors.

- Senior-engineer-led recovery to validate restores and hardening steps.

For regulated organizations in NJ and NY, confirm the MSSP's ransomware response capabilities, specifically its experience with forensic chains of custody, familiarity with NYDFS requirements, HIPAA breach response processes, and ability to support insurer-required vendor engagements. Pre-arrange response playbooks and run joint exercises with the MSSP to verify operating procedures and senior-engineer availability during critical windows.

Appendix: sample timelines, checklists, and decision matrix (pay/no-pay guidance)

Sample timeline (compact):

- 0–4 hours: detection verification, preserve memory and logs, initial holding communication.

- 4–24 hours: containment actions, credential resets, regulator initial alerts if required.

- 24–72+ hours: recovery from verified backups, phased return to service, regulator updates and insurer claims.

Recovery checklist (copyable):

- Identify uncompromised backup snapshot.

- Restore to isolated environment and run application smoke tests.

- Validate file integrity checksums and run EDR scans.

- Rotate credentials and re-enable services incrementally.

- Monitor for 72 hours post-restore for suspicious activity.

Decision matrix: pay vs no-pay (simplified)

| Condition | Pay | Do not pay |
|---|---|---|
| Verified backups exist and restore < 72 hours | | Preferred: restore from backups |
| Backups corrupted or no immutable snapshots | Consider with legal/insurer advice | |
| Extortion threat to publicly expose PHI/PII and legal exposure high | Evaluate with counsel and insurer | |
| Law enforcement advises against payment | | Follow law enforcement guidance |

Note: payment decisions carry legal, ethical, and insurance implications. Many insurers require pre-approval to cover ransom payments. Document every decision and seek insurer and legal counsel input before transferring funds.
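
For tabletop exercises, the simplified matrix can be expressed as a lookup so participants apply it the same way each time. This is a sketch only, not decision advice: the function and parameter names are illustrative, and both the order in which conditions are checked and the treatment of cases the matrix does not list (routed to counsel and insurer) are assumptions.

```python
def payment_guidance(law_enforcement_advises_against: bool,
                     verified_backups: bool,
                     estimated_restore_hours: float,
                     exposure_threat: bool) -> str:
    """Map the simplified matrix conditions to a guidance label."""
    # Assumption: law enforcement guidance takes precedence over other rows.
    if law_enforcement_advises_against:
        return "do_not_pay: follow law enforcement guidance"
    # A threat to publish PHI/PII goes to counsel even if backups are usable.
    if exposure_threat:
        return "escalate: evaluate with counsel and insurer"
    if verified_backups and estimated_restore_hours < 72:
        return "do_not_pay: restore from backups"
    # Corrupted backups, no immutable snapshots, or any case not in the matrix.
    return "escalate: evaluate with counsel and insurer"
```

The function never returns a recommendation to pay; consistent with the note above, that decision stays with legal counsel and the insurer.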

FAQ

What is a ransomware incident response and recovery playbook for regulated NJ and NY companies?

A ransomware incident response & recovery playbook for regulated NJ & NY companies is a documented set of operational procedures that define detection thresholds, containment steps, evidence preservation, recovery workflows, and compliance reporting tailored to New Jersey and New York regulatory requirements.

How does a ransomware incident response and recovery playbook for regulated NJ and NY companies work?

The playbook works by mapping detection triggers to concrete containment actions, assigning clear roles and contacts, preserving forensic evidence, restoring systems from verified backups per a backup and disaster recovery playbook, and executing regulator and customer communications within statutory windows.

Conclusion: Implementing a tested ransomware recovery plan and backup and disaster recovery playbook reduces downtime and regulatory risk for NJ and NY organizations. If you need hands-on support with monitoring, threat hunting, or senior-engineer-led recovery, learn about our services, request a demo, or contact us for an initial assessment.